Early attempts at artificial intelligence included efforts to play games, like chess. This type of AI is known as narrow or weak AI. Because weak AI has a specific focus, it has been likened to a one-trick pony. Multimodal artificial intelligence (sometimes called multimodal machine learning) expands the focus of AI systems. Louis-Philippe Morency, an Assistant Professor at Carnegie Mellon University, and Tadas Baltrušaitis, a Senior Scientist at Microsoft, Computer Vision, explain, “Multimodal machine learning is a vibrant multi-disciplinary research field which addresses some of the original goals of artificial intelligence by integrating and modeling multiple communicative modalities, including linguistic, acoustic and visual messages. With the initial research on audio-visual speech recognition and more recently with image and video captioning projects, this research field brings some unique challenges for multimodal researchers given the heterogeneity of the data and the contingency often found between modalities.”[1]

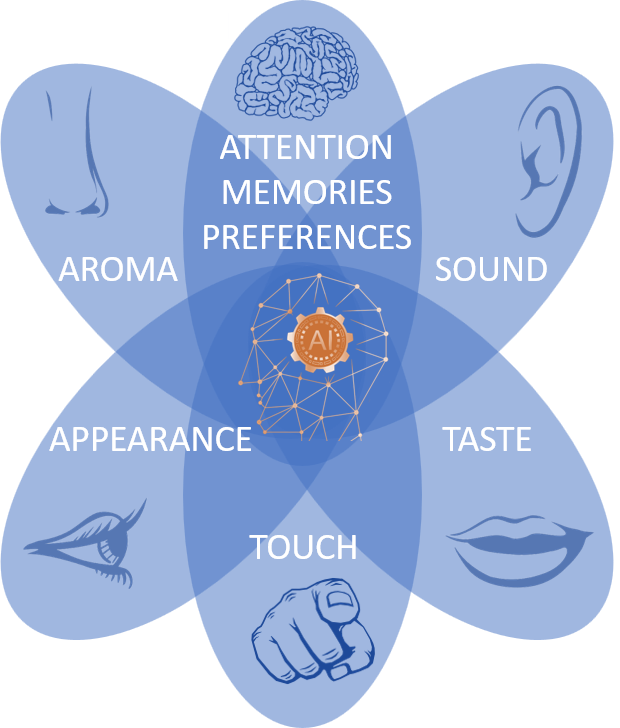

Morency and Baltrušaitis go on to explain that a modality is “the way in which something happens or is experienced. Modality refers to a certain type of information and/or the representation format in which information is stored.” A “sensory modality” involves “one of the primary forms of sensation, such as vision or touch” or a “channel of communication.” A few examples of modalities are natural language (spoken or written); visual; auditory (e.g., voice, sounds, music); haptics; smell; and physiological signals. They explain that beginning around a decade ago computer scientists started working with representation learning (aka deep learning) and multimodal artificial intelligence. Enabling this research were new, large-scale datasets, faster computers and graphics processing units (GPUs), high-level visual features, and “dimensional” linguistic features.

Pragati Baheti, an SDE’20 intern at Microsoft, notes that deep learning has made remarkable strides in single-mode applications. She writes, “Deep neural networks have been successfully applied to unsupervised feature learning for single modalities — e.g., text, images or audio.”[2] One such system receiving a lot of hype is OpenAI’s GPT-3 (i.e., the third generation of “Generative Pre-Training” or “Generative Pre-trained Transformer” models). GPT-3 is primarily a language model which, when given an input text, is trained to predict the next word or words. Its Natural Language Processing (NLP) performance is impressive. Many computer scientists believe, however, that the only way cognitive technologies will increase their understanding of the world is to let them experience data in a multimodal way. As discussed below, there have been advancements in multimodal AI computing, and breakthroughs in China suggest it may be ahead in this important technology sector.
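To make "trained to predict the next word" concrete, here is a minimal sketch of the idea using a toy bigram counter. This is not how GPT-3 works internally (GPT-3 is a large neural network); the corpus and the function names `train_bigram` and `predict_next` are hypothetical and chosen only for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if none was seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical toy corpus (an assumption for illustration only).
corpus = "the cat sat on the mat and the cat slept"
model = train_bigram(corpus)

print(predict_next(model, "the"))  # prints "cat" ("cat" follows "the" twice, "mat" once)
```

A real language model replaces the lookup table with learned parameters and conditions on far more than the previous word, but the task it is trained on is the same: given the text so far, guess what comes next.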

Why Multi-Modal AI is Important

As humans, we interpret the world around us using all our senses. Baheti explains, “Our experience of the world is multimodal — we see objects, hear sounds, feel the texture, smell odors and taste flavors and then come up to a decision. Multimodal learning suggests that when a number of our senses — visual, auditory, kinesthetic — are being engaged in the processing of information, we understand and remember more. By combining these modes, learners can combine information from different sources.”[2] AI is still trying to understand the world using multimodal techniques. Baheti notes, “Working with multimodal data not only improves neural networks, but it also includes better feature extraction from all sources that thereby contribute to making predictions at a larger scale.”

Technology journalist Kyle Wiggers (@Kyle_L_Wiggers) explains, “Unlike most AI systems, humans understand the meaning of text, videos, audio, and images together in context. For example, given text and an image that seem innocuous when considered apart (e.g., ‘Look how many people love you’ and a picture of a barren desert), people recognize that these elements take on potentially hurtful connotations when they’re paired or juxtaposed. While systems capable of making these multimodal inferences remain beyond reach, there’s been progress. New research has advanced the state-of-the-art in multimodal learning, particularly in the subfield of visual question answering (VQA), a computer vision task where a system is given a text-based question about an image and must infer the answer. As it turns out, multimodal learning can carry complementary information or trends, which often only become evident when they’re all included in the learning process. And this holds promise for applications from captioning to translating comic books into different languages.”[3]
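The point that complementary information may "only become evident" when modalities are learned together can be illustrated with a toy sketch. The data below is entirely hypothetical: each sample has a made-up text flag and image flag, and the label is set only when exactly one flag is on, loosely mirroring the example of text and image that are innocuous apart but not together. The helper `best_accuracy` is an illustrative name, not part of any library.

```python
from collections import Counter, defaultdict

# Hypothetical toy data: (text_flag, image_flag, label).
# The label is 1 only when exactly one flag is set, so neither
# flag on its own carries any information about the label.
samples = [(0, 0, 0), (0, 1, 1), (1, 0, 1), (1, 1, 0)] * 25

def best_accuracy(samples, feature_fn):
    """Accuracy of the best lookup-table predictor that sees only feature_fn(text, image)."""
    by_feature = defaultdict(Counter)
    for text, image, label in samples:
        by_feature[feature_fn(text, image)][label] += 1
    # For each feature value, the best guess is its most common label.
    correct = sum(counts.most_common(1)[0][1] for counts in by_feature.values())
    return correct / len(samples)

text_only = best_accuracy(samples, lambda t, i: t)
image_only = best_accuracy(samples, lambda t, i: i)
both = best_accuracy(samples, lambda t, i: (t, i))

print(text_only, image_only, both)  # prints "0.5 0.5 1.0"
```

On this contrived data, either modality alone does no better than a coin flip, while the pair predicts the label perfectly. Real multimodal data is rarely this clean, but the sketch shows why a joint model can find signal that single-modality models cannot.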

Translating comic books into different languages may not seem very important; however, expanding NLP beyond English is. Sebastian Ruder (@seb_ruder), a research scientist at DeepMind, notes, “Natural language processing research predominantly focuses on developing methods that work well for English despite the many positive benefits of working on other languages. … Technology cannot be accessible if it is only available for English speakers with a standard accent. What language you speak determines your access to information, education, and even human connections. Even though we think of the Internet as open to everyone, there is a digital language divide between dominant languages (mostly from the Western world) and others. Only a few hundred languages are represented on the web and speakers of minority languages are severely limited in the information available to them.”[4] Many computer scientists, like Blake Yan, an AI researcher from Beijing, believe multi-modal efforts will eventually lead to sophisticated artificial general intelligence. He explains, “These sophisticated models, trained on gigantic data sets, only require a small amount of new data when used for a specific feature because they can transfer knowledge already learned into new tasks, just like human beings. Large-scale pre-trained models are one of today’s best shortcuts to artificial general intelligence.”[5]

China’s WuDao 2.0 Natural Language Processing Model

Tech reporter Andrew Tarantola (@Terrortola) reports, “When Open AI’s GPT-3 model made its debut in May of 2020, its performance was widely considered to be the literal state of the art. Capable of generating text indiscernible from human-crafted prose, GPT-3 set a new standard in deep learning. But oh what a difference a year makes. Researchers from the Beijing Academy of Artificial Intelligence announced on [1 June 2021] the release of their own generative deep learning model, WuDao, a mammoth AI seemingly capable of doing everything GPT-3 can do, and more.”[6] Tech journalist Coco Feng (@CocoF1026) adds, “The WuDao 2.0 model is a pre-trained AI model that uses 1.75 trillion parameters to simulate conversational speech, write poems, understand pictures and even generate recipes. … Parameters are variables defined by machine learning models. As the model evolves, parameters are further refined to allow the algorithm to get better at finding the correct outcome over time. Once a model is trained on a specific data set, such as samples of human speech, the outcome can then be applied to solving similar problems. In general, the more parameters a model contains, the more sophisticated it is.”[7]
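Feng's description of parameters being "further refined" during training can be sketched with the smallest possible model: a single parameter `w` in `y = w * x`, adjusted by gradient descent. The data, learning rate, and step count below are assumptions chosen for illustration; WuDao 2.0 refines 1.75 trillion such values with far more elaborate machinery.

```python
def fit_weight(data, lr=0.05, steps=200):
    """Fit y ≈ w * x by gradient descent on mean squared error."""
    w = 0.0  # the model's single parameter, starting from an arbitrary value
    for _ in range(steps):
        # Gradient of mean((w*x - y)^2) with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad  # nudge the parameter to reduce the error
    return w

# Hypothetical training data generated by y = 2 * x.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
w = fit_weight(data)

print(round(w, 4))  # prints 2.0
```

Each pass moves `w` a little closer to the value that best explains the data, which is the sense in which parameters are refined "to get better at finding the correct outcome over time."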

If parameter count is the metric for sophistication, Tarantola’s figures put WuDao 2.0 well ahead of other systems. He explains, “[WuDao 2.0’s parameters are] ten times larger than the 175 billion GPT-3 was trained on and 150 billion parameters larger than Google’s Switch Transformers.” According to Feng, “WuDao 2.0 covers both Chinese and English with skills acquired by studying 4.9 terabytes of images and texts, including 1.2 terabytes each of Chinese and English texts.” Tarantola indicates WuDao 2.0 is no one-trick pony. He explains, “Unlike most deep learning models which perform a single task — write copy, generate deep fakes, recognize faces, win at Go — WuDao is multi-modal. … BAAI researchers demonstrated WuDao’s abilities to perform natural language processing, text generation, image recognition, and image generation tasks. … The model can not only write essays, poems and couplets in traditional Chinese, it can both generate alt text based off of a static image and generate nearly photorealistic images based on natural language descriptions.”
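The parameter comparisons quoted above can be checked with simple arithmetic. The sketch below uses only the figures given by Feng and Tarantola; the Switch Transformers size is not stated in the quotes, so the value computed here is merely what the "150 billion parameters larger" claim implies.

```python
WUDAO_PARAMS = 1_750_000_000_000  # 1.75 trillion, as reported by Feng
GPT3_PARAMS = 175_000_000_000     # 175 billion, as quoted from Tarantola
GAP_TO_SWITCH = 150_000_000_000   # "150 billion parameters larger", per Tarantola

# How many times larger WuDao 2.0 is than GPT-3.
ratio = WUDAO_PARAMS / GPT3_PARAMS

# Size of Google's Switch Transformers implied by the quoted gap (an inference, not a quoted figure).
implied_switch_params = WUDAO_PARAMS - GAP_TO_SWITCH

print(ratio)                  # prints 10.0
print(implied_switch_params)  # prints 1600000000000
```

The quoted figures are internally consistent: WuDao 2.0 is ten times the size of GPT-3, and the implied Switch Transformers size works out to 1.6 trillion parameters.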

Concluding Thoughts

America and its Western allies are in a race with China in the areas of artificial intelligence and quantum computing. Although the U.S. still holds the lead, studies indicate that lead is narrowing. Feng reports, “A March report by the US National Security Commission on Artificial Intelligence, which includes former Google CEO Eric Schmidt as a chairman along with representatives from other major tech firms, identified China as a potential threat to American AI supremacy. The Rand Corporation think tank also warned last year that Beijing’s focus on AI has helped it substantially narrow its gap with the US, attributing the country’s ‘modest lead’ to its dominant semiconductor sector.” Multimodal AI is one area where China is making great strides and may even have pulled ahead.

Footnotes

[1] Louis-Philippe Morency and Tadas Baltrušaitis, “Tutorial on Multimodal Machine Learning,” Carnegie Mellon University, 2017.

[2] Pragati Baheti, “Introduction to Multimodal Deep Learning,” Heartbeat, 27 February 2020.

[3] Kyle Wiggers, “The immense potential and challenges of multimodal AI,” Venture Beat, 30 December 2020.

[4] Sebastian Ruder, “Why You Should Do NLP Beyond English,” Sebastian Ruder Website, 1 August 2020.

[5] Coco Feng, “US-China tech war: Beijing-funded AI researchers surpass Google and OpenAI with new language processing model,” South China Morning Post, 2 June 2021.

[6] Andrew Tarantola, “China’s gigantic multi-modal AI is no one-trick pony,” Engadget, 2 June 2021.

[7] Feng, op. cit.