Nowadays, few people question the value of data. Yossi Sheffi (@YossiSheffi), Director of the MIT Center for Transportation & Logistics, goes so far as to assert that data is an organization’s most valuable asset. He writes, “The well-worn adage that a company’s most valuable asset is its people needs an update. Today, it’s not people but data that tops the asset value list for companies.”[1] If data is indeed a company’s most valuable asset, then business leaders need to understand what makes data valuable. You often read about the four “V’s” of big data. For example, Julius Černiauskas (@juliuscern), CEO at Oxylabs, writes, “Big data is often differentiated by the four V’s: velocity, veracity, volume and variety. Researchers assign various measures of importance to each of the metrics, sometimes treating them equally, sometimes separating one out of the pack.”[2] And journalist Sunil Yadav writes, “When data scientists capture and analyze data, they discover that it is categorized into four sections. They are the four Vs of Big Data.”[3]

Almost everyone agrees that those four V’s give definition to the term “big data”: volume describes the amount of data (there’s lots of it); velocity describes how quickly it’s generated (at an amazing pace); variety describes data’s many forms; and veracity describes the importance of quality data (not all data is accurate). As Sheffi’s comment suggests, however, there is a fifth “V”: value (data is often described as the new oil). As the value of data became apparent, attacks on that data followed, requiring a sixth “V”: vulnerability (database breaches occur on a regular basis). Then, following the 2016 U.S. presidential election, it was revealed that a company called Cambridge Analytica had used personal data to manipulate voters. As journalist Nick Ismail (@ishers123) writes, “When huge amounts of data on individuals are aggregated into one place, it becomes possible to use that data to manipulate the individual.”[4] The scandal revealed the need for yet another “V”: virtue (the ethical use of data).

Although some pundits have identified even more “V’s,” seven seems an appropriate number for defining big data and explaining its importance. Journalist Anna Pukas insists seven is the world’s favorite number. She writes, “Pick a number, any number. Chances are it will be seven. Whatever your creed or culture the number seven is special.”[5] There are seven colors in a rainbow and seven deadly sins, and the biblical account of creation spans seven days. So I’ll concentrate on those seven “V’s.” If you’re curious about what other “V’s” have been suggested, George Firican (@georgefirican), director of data governance and business intelligence at the University of British Columbia, adds four more to the list: variability, validity, volatility, and visualization.[6]

The Seven Big Data V’s

1. Volume

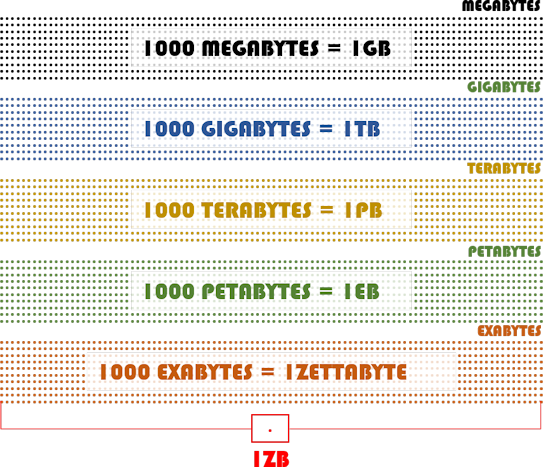

Černiauskas writes, “While there have been calculations on the number of petabytes of content produced online every day, we might as well treat the total volume of big data as infinite. … The production of data happens around the clock, and it keeps growing exponentially.” Volume is arguably the most significant characteristic of big data. How big is big? In 2016, the world surpassed the zettabyte mark of generated data. The accompanying graphic can help you understand how enormous that amount of data is.

2. Variety

Firican notes, “When it comes to big data, we don’t only have to handle structured data but also semistructured and mostly unstructured data as well. … Most big data seems to be unstructured, but besides audio, image, video files, social media updates, and other text formats there are also log files, click data, machine and sensor data, etc.” And Černiauskas adds, “While new data types aren’t invented, at least on a large scale, there’s always the possibility to go more granular with variety.”

3. Velocity

Brent Dykes (@analyticshero), Director of Data Strategy at Domo, writes, “The volume and variety aspects of Big Data receive the lion’s share of attention. However, you should consider taking a closer look at the velocity dimension of Big Data — it may have a bigger impact on your business than you think. … Velocity underscores the need to process the data quickly and, most importantly, use it at a faster rate than ever before.”[7] In other words, velocity refers not only to how quickly data is generated but also to how quickly it must be used while it is still timely. Yadav explains, “At times, even a couple of minutes may seem too late. Some processes, such as data fraud detection, may be time-sensitive. In such instances, data needs analyzed and used as it streams into the enterprise to maximize its value.”

4. Veracity

The importance of data quality can’t be overstated. One of my favorite headlines is: “If you put garbage in, do you get machine learning out?”[8] Anil Somani, author of that article, writes, “It’s important to establish policies and processes that maintain a high level of data consistency and cleanliness, otherwise companies will find the quality of their analytics will degrade as the quality of their data degrades. When that happens, it opens the door to a sub-optimal decision-making process and has an adverse impact on clients.” The staff at GutCheck insists veracity is the most important of all the V’s. They explain, “There is one ‘V’ that we stress the importance of over all the others — veracity. Data veracity is the one area that still has the potential for improvement and poses the biggest challenge when it comes to big data. With so much data available, ensuring it’s relevant and of high quality is the difference between those successfully using big data and those who are struggling to understand it.”[9]

5. Value

Trying to decide which “V” is most important is a fool’s errand. Nevertheless, Firican suggests “value” may be “the most important of all” big data characteristics. He adds, “The other characteristics of big data are meaningless if you don’t derive business value from the data.” Value is important, but without cognitive technologies (also known as artificial intelligence), that value remains locked inside databases with few ways to escape.

6. Vulnerability

Vulnerability primarily addresses the fact that hackers are constantly trying to breach large databases and steal the information they contain. Bank robbers rob banks because that’s where the money is. Hackers hack databases because that’s where the data is. When databases containing personal data are breached, corporate reputations can be damaged. Most companies, however, are more concerned about the financial damage caused by data breaches. A recent report from IBM Security found that 83% of surveyed organizations have experienced more than one data breach and that each succeeding breach has been more costly.[10] The study found that the average cost of a data breach has reached an all-time high of $4.35 million.

7. Virtue

Journalist James Spillane writes, “It’s time to talk about the integrity of data and ask, is the usage of this data moral?”[11] Lisa Morgan (@lisamorgan) adds, “The amount of data being collected about people, companies, and governments is unprecedented. What can be done with that data is downright frightening.”[12] Futurist Bernard Marr (@BernardMarr) suggests doing the right thing with data is good for everyone. He explains, “At the moment there is undeniably a perception that corporations and public bodies can be evasive or secretive about the data they collect and how they use it. Adopting a pro-active policy of transparency doesn’t just increase trust, it gives an opportunity for organizations to open another conversation about the value that their services can bring.”[13]

Concluding Thoughts

There is no denying that the lingering pandemic, snarled supply chains, confrontational geopolitics, and rising inflation have set the world back a bit. Regardless, Dimitri Sirota (@dimitrisirota), CEO and co-founder of BigID, believes that the world economy will rebound, and even roar, thanks to the value created by big data. He explains, “This time around, the Roaring 20’s will bring about not mass manufacturing and increased migration to cities, as it did a century ago. It will bring the power of context to the concept of data discovery with automated analytics. … But before they can see returns, they must first understand that in this century, data is the foundation for all financial progress and business innovation. And if you don’t know what data you have, you’ll never know the benefits that data can bring.”[14] Business leaders need not only to know what data they have but also to understand the seven V’s that define big data and its importance.

Footnotes

[1] Yossi Sheffi, “What is a Company’s Most Valuable Asset? Not People,” LinkedIn, 19 December 2018.

[2] Julius Černiauskas, “Understanding The 4 V’s Of Big Data,” Forbes, 23 August 2022.

[3] Sunil Yadav, “The Complete Guide to the 4 V’s of Big Data,” Baseline, 18 August 2022.

[4] Nick Ismail, “What the Cambridge Analytica scandal means for big data,” Information Age, 29 March 2018.

[5] Anna Pukas, “The magnificent 7: The meaning and history behind the world’s favourite number,” Express, 10 April 2014.

[6] George Firican, “The 10 Vs of Big Data,” Upside, 8 February 2017.

[7] Brent Dykes, “Big Data: Forget Volume and Variety, Focus On Velocity,” Forbes, 28 June 2017.

[8] Anil Somani, “If you put garbage in, do you get machine learning out?” Altimetrik, 30 July 2019.

[9] Staff, “Veracity: The Most Important ‘V’ of Big Data,” GutCheck, 29 August 2019.

[10] Staff, “Global average cost of a data breach reached an all-time high of $4.35 million: Report,” ET CIO, 28 July 2022.

[11] James Spillane, “The New ‘V’ for Big Data: Virtue,” Business 2 Community, 18 January 2018.

[12] Lisa Morgan, “14 Creepy Ways To Use Big Data,” InformationWeek, 30 October 2015.

[13] Bernard Marr, “Big Data: The 6th ‘V’ Everyone Should Know About,” Forbes, 20 December 2016.

[14] Dimitri Sirota, “In the Roaring 2020s, Data Will Be Your Most Vital Asset,” insideBIGDATA, 20 November 2021.