“Pick a number, any number,” writes Anna Pukas. “Chances are it will be seven. Whatever your creed or culture the number seven is special. … There’s a reason why Yul Brynner, Charles Bronson and the other gunfighters in the 1960 film were magnificent and that’s because there were seven of them.”[1] There are seven colors in a rainbow and seven deadly sins. The Bible's creation story spans seven days. Why does seven keep recurring? Because, for many ancient peoples, seven represented completeness or perfection. It should, therefore, come as no surprise that a seventh “V” has been added to the characteristics of big data.

To help explain why Big Data was different from historical data, people talked about three “Vs”: Volume (there is lots of it), Velocity (it is generated at an amazing pace), and Variety (it comes in structured and unstructured forms). Soon a fourth “V” was added: Veracity (not all data is accurate). Then came a fifth: Value (data is the new gold). Cyber attacks required a sixth: Vulnerability (database breaches occur on a regular basis). Finally, James Spillane suggests adding a seventh: Virtue (the ethical use of big data).[2] It would be neat if everyone accepted seven as the complete list of Big Data “Vs,” but George Firican (@georgefirican), director of data governance and business intelligence at the University of British Columbia, wants to add four more: Variability, Validity, Volatility, and Visualization.[3] I’ll stick with seven for the time being.

Volume

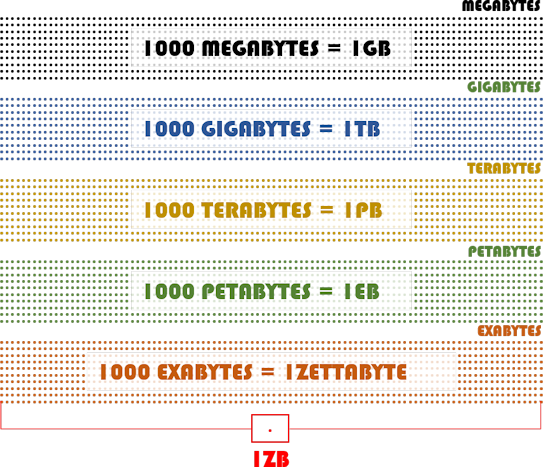

Steve Lohr (@SteveLohr) credits John Mashey, who was the chief scientist at Silicon Graphics in the 1990s, with coining the term Big Data.[4] Lohr asserts the term refers not only to “a lot of data, but different types of data handled in new ways.”[4] While that may be true, one can’t ignore the fact that volume is the most significant characteristic of Big Data. The volume of data being created is unprecedented and will only increase. The Internet of Things (IoT) is going to generate a massive amount of data. How much? “Annual global IP traffic [surpassed] the zettabyte (1000 exabytes) threshold by the end of 2016,” reports Víctor Vilas (@victorvilasmatz), Business Development Manager Europe for AndSoft, “and will reach 2 zettabytes per year by 2019.”[5] What Vilas is really saying is that the era of Big Data is over. Why? Because calling a zettabyte of data “big” doesn’t conjure up the right picture of just how really, really big a zettabyte of data is. The following graphic provides you with some indication of how large a zettabyte really is.
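The arithmetic behind Vilas's figures is also easy to sketch. The short Python example below converts a zettabyte into more familiar units and computes the annual growth implied by going from about 1 zettabyte per year to 2. It assumes decimal (SI) units and treats "end of 2016" to "2019" as three years; both are simplifying assumptions, not part of Vilas's report.

```python
# Rough scale check for the IP traffic figures quoted above.
# Assumption: decimal (SI) units, so 1 ZB = 10**21 bytes and 1 TB = 10**12 bytes.
ZETTABYTE = 10**21
EXABYTE = 10**18
TERABYTE = 10**12

# One zettabyte expressed in exabytes and in one-terabyte drives.
exabytes_per_zb = ZETTABYTE // EXABYTE
tb_drives_per_zb = ZETTABYTE // TERABYTE

# Implied compound annual growth if traffic doubles from ~1 ZB/yr to ~2 ZB/yr.
# Assumption: the span from end of 2016 to 2019 is treated as three years.
start_zb, end_zb, years = 1.0, 2.0, 3
annual_growth = (end_zb / start_zb) ** (1 / years) - 1

print(f"1 ZB = {exabytes_per_zb:,} EB = {tb_drives_per_zb:,} one-terabyte drives")
print(f"Implied annual growth: {annual_growth:.1%}")
```

In other words, a single zettabyte would fill roughly a billion one-terabyte drives, and doubling in three years means traffic growing by about a quarter every year.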

Velocity

Brent Dykes (@analyticshero), Director of Data Strategy at Domo, writes, “In terms of the three V’s of Big Data, the volume and variety aspects of Big Data receive the lion’s share of attention. However, you should consider taking a closer look at the velocity dimension of Big Data — it may have a bigger impact on your business than you think. … Velocity underscores the need to process the data quickly and, most importantly, use it at a faster rate than ever before.”[6]

Variety

Firican notes, “When it comes to big data, we don’t only have to handle structured data but also semistructured and mostly unstructured data as well. … Most big data seems to be unstructured, but besides audio, image, video files, social media updates, and other text formats there are also log files, click data, machine and sensor data, etc.”

Veracity

“Data Veracity (i.e., [the presence of] uncertain or imprecise data),” writes Michael Walker, “is often overlooked yet may be as important as the 3 V’s of Big Data: Volume, Velocity and Variety.”[7] He calls data with uncertain veracity “data in doubt.” “Uncertainty,” he writes, “[is] due to data inconsistency and incompleteness, ambiguities, latency, deception, [and/or] model approximations.”
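Two of Walker's sources of uncertainty, incompleteness and inconsistency, can be checked mechanically. The Python sketch below flags "data in doubt" in a handful of records. The sensor records, the field names, the function `flag_data_in_doubt`, and the rule that one sensor should report one value per timestamp are all hypothetical illustrations, not part of Walker's article. Ambiguity, deception, and model approximation are much harder to detect automatically and are not covered here.

```python
from collections import defaultdict

# Hypothetical sensor readings: the field names and values are illustrative only.
records = [
    {"sensor_id": "A1", "timestamp": "2018-01-01T00:00", "temp_c": 21.5},
    {"sensor_id": "A1", "timestamp": "2018-01-01T00:00", "temp_c": 35.0},  # conflicts with row 0
    {"sensor_id": "B2", "timestamp": "2018-01-01T00:00", "temp_c": None},  # incomplete
    {"sensor_id": "C3", "timestamp": "2018-01-01T00:00", "temp_c": 19.0},  # clean
]

REQUIRED_FIELDS = ("sensor_id", "timestamp", "temp_c")


def flag_data_in_doubt(rows):
    """Return (row_index, reason) pairs for incomplete or inconsistent rows."""
    flags = []

    # Incompleteness: a required field is absent or None.
    for i, row in enumerate(rows):
        missing = [f for f in REQUIRED_FIELDS if row.get(f) is None]
        if missing:
            flags.append((i, "missing " + ", ".join(missing)))

    # Inconsistency (assumed rule): one sensor should report one value per
    # timestamp, so every row sharing a key with differing values is suspect.
    readings = defaultdict(list)  # (sensor_id, timestamp) -> [(row_index, value)]
    for i, row in enumerate(rows):
        if row.get("temp_c") is not None:
            key = (row.get("sensor_id"), row.get("timestamp"))
            readings[key].append((i, row["temp_c"]))
    for key, entries in readings.items():
        if len({value for _, value in entries}) > 1:
            for i, _ in entries:
                flags.append((i, f"conflicting values for {key}"))

    return flags


for index, reason in flag_data_in_doubt(records):
    print(index, reason)
```

A flagged row is not necessarily wrong; it is simply in doubt and deserves a closer look before anyone builds decisions on it.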

Value

Firican calls value “arguably the most important of all” big data characteristics. He adds, “The other characteristics of big data are meaningless if you don’t derive business value from the data.” Kristian J. Hammond (@KJ_Hammond), chief scientist of Narrative Science and a professor of computer science and journalism at Northwestern University, argues, counterintuitively, that the value of big data isn’t the data. He explains, “If we’re going to really capitalize on Big Data, we need [to] get to human insight at machine scale. We will need systems that not only perform data analysis, but then also communicate the results that they find in a clear, concise narrative form.”[8] That’s exactly why cognitive computing platforms are being developed.

Vulnerability

Vulnerability primarily addresses the fact that hackers are constantly trying to breach large databases and steal the information they contain. Bank robbers rob banks because that’s where the money is. Hackers hack databases because that’s where the data is. Bernard Marr (@BernardMarr) sees another dimension of vulnerability as well. He observes, “Vulnerability addresses the fact that a growing number of people are becoming switched on to the fact that their personal data — the lifeblood of many commercial Big Data initiatives — is being gobbled up by the gigabyte, used to pry into their behavior and, ultimately, sell them things.”[9]

Virtue

Stirrup writes, “It’s time to talk about the integrity of data and ask, is the usage of this data moral?” Lisa Morgan (@lisamorgan) adds, “The amount of data being collected about people, companies, and governments is unprecedented. What can be done with that data is downright frightening.”[10] Marr suggests doing the right thing with data is good for everyone. He explains, “At the moment there is undeniably a perception that corporations and public bodies can be evasive or secretive about the data they collect and how they use it. Adopting a pro-active policy of transparency doesn’t just increase trust, it gives an opportunity for organizations to open another conversation about the value that their services can bring.”

Summary

Gaining the greatest possible benefits from big data requires companies to understand each of the characteristics associated with enormous databases and their potential uses. Above all, companies need to understand that they have an obligation to protect personal data and use it ethically. If they don’t, society as a whole will eventually pay a high cost one way or another.

Footnotes

[1] Anna Pukas, “The magnificent 7: The meaning and history behind the world’s favourite number,” Express, 10 April 2014.

[2] James Spillane, “The New ‘V’ for Big Data: Virtue,” Business 2 Community, 18 January 2018.

[3] George Firican, “The 10 Vs of Big Data,” Upside, 8 February 2017.

[4] Steve Lohr, “The Origins of ‘Big Data’: An Etymological Detective Story,” The New York Times, 1 February 2013.

[5] Víctor Vilas, “Welcome to the era zettabyte thanks to the internet of things,” AndSoft Blog, 21 September 2015.

[6] Brent Dykes, “Big Data: Forget Volume and Variety, Focus On Velocity,” Forbes, 28 June 2017.

[7] Michael Walker, “Data Veracity,” Data Science Central, 28 November 2012.

[8] Kristian J. Hammond, “The Value of Big Data Isn’t the Data,” Harvard Business Review, 1 May 2013.

[9] Bernard Marr, “Big Data: The 6th ‘V’ Everyone Should Know About,” Forbes, 20 December 2016.

[10] Lisa Morgan, “14 Creepy Ways To Use Big Data,” InformationWeek, 30 October 2015.