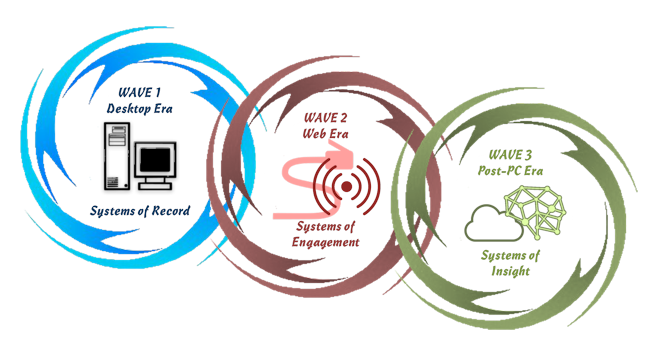

According to Matt Sanchez (@MattSanchez), founder and CTO of Cognitive Scale, “Cognitive Computing is the most significant disruption in the evolution of computing since the industry stopped using punched cards in the 1950s.”[1] That’s a bold statement, especially since the term cognitive computing refers to a number of different approaches. Cognition is defined as “the action or process of acquiring knowledge and understanding through thought, experience, and the senses.” Of course, that definition has to be modified slightly when applied to a thinking machine. At Enterra Solutions®, we define a cognitive system as one that discovers insights and relationships through analysis, machine learning, and sensing. Sanchez defines cognitive computing as the “third generation of computing involving feedback driven self-learning software systems that interpret and learn from multi-structured Big Data (numbers, text, image, audio, video). They use techniques such as natural language processing, spatial navigation, machine vision, machine learning and pattern recognition to learn a domain based on the best available data and get better and more immersive with time.” As his definition implies, Sanchez believes that two IT waves preceded what he calls the “Post-PC Era”: the Desktop Era and the Web Era. He makes such bold predictions about cognitive computing in the Post-PC Era because he perceives cognitive systems (as do I) as “Systems of Insight.”

John Gordon, vice president of IBM Watson Solutions, agrees with Sanchez that we are entering a third IT era. According to Gordon, the first two eras were “the tabulation era and the programmable computer era. Now, he says, we’re beginning to embark on a third: the era of cognitive computing.”[2] Gordon went on to predict, “I truly believe that the cognitive computing era will go on for the next 50 years and will be as transformational for industry as the first types of programmable computers were in the 1950s.” That Sanchez and Gordon think alike is no coincidence, since Sanchez’ background links him to the cognitive computing approach used by IBM’s Watson system. Watson essentially takes a brute-force approach to cognitive analytics. It analyzes massive amounts of data and provides a “best guess” answer (IBM calls it a “confidence-weighted response”) based on what it finds. That’s how Watson beat human champions on the game show Jeopardy! This brute-force approach is often called deep learning.
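To make the idea of a “confidence-weighted response” a little more concrete, here is a minimal Python sketch of the general pattern. It is not how IBM implements Watson; it is an illustration under our own assumptions. Each candidate answer carries evidence scores from several scorers, a weighted sum ranks the candidates, and a softmax turns those sums into confidences that add up to 1. The `Candidate` class, the scorer names, the weights, and the optional `threshold` are all hypothetical.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical candidate answer with evidence scores from several scorers."""
    answer: str
    evidence: dict[str, float]  # scorer name -> score (assumed to lie in [0, 1])


def confidence_weighted_answer(candidates, weights, threshold=0.0):
    """Rank candidates by weighted evidence and convert scores to confidences.

    Returns (best_answer, confidence, ranking). best_answer is None when the top
    confidence falls below `threshold` (a hypothetical "only answer when sure" rule).
    """
    # Weighted sum of evidence per candidate; unknown scorers get weight 0.
    scores = {
        c.answer: sum(weights.get(name, 0.0) * s for name, s in c.evidence.items())
        for c in candidates
    }
    # Softmax over the scores; subtracting the max keeps exp() numerically stable.
    top = max(scores.values())
    exp_scores = {a: math.exp(s - top) for a, s in scores.items()}
    total = sum(exp_scores.values())
    ranking = sorted(
        ((a, e / total) for a, e in exp_scores.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )
    best_answer, confidence = ranking[0]
    if confidence < threshold:
        best_answer = None  # decline to answer when not confident enough
    return best_answer, confidence, ranking


if __name__ == "__main__":
    cands = [
        Candidate("Mercury", {"text_match": 0.4, "type_match": 0.1}),
        Candidate("Venus", {"text_match": 0.7, "type_match": 0.9}),
    ]
    w = {"text_match": 2.0, "type_match": 3.0}
    print(confidence_weighted_answer(cands, w, threshold=0.5))
```

The softmax is one simple way to express relative confidence; a real system might instead learn a calibrated model over many evidence features. The `threshold` illustrates a design choice: a system can decline to respond when even its best guess is weak.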

At Enterra, we take a different approach to cognitive computing. We promote our Cognitive Reasoning Platform™ (CRP) as a system that can Sense, Think, Act, and Learn®. The CRP also uses various techniques to overcome the challenges associated with most deep learning systems. Like deep learning systems, the CRP gets smarter over time and self-tunes by automatically detecting correct and incorrect decision patterns, but it also bridges the gap between a pure mathematical technique and semantic understanding. The CRP can do math, but it also understands and reasons about what it discovers. Marrying advanced mathematics with semantic understanding is critical; we call this “Cognitive Reasoning.” The Enterra CRP approach to cognitive computing, which applies the best solvers (often several of them) to the challenge at hand and interprets their results semantically, is superior for many of the challenges to which deep learning is now being applied.

All cognitive computing systems add value beyond traditional computing methods. Sanchez notes that “cognitive analytics extends Big Data analytics in three ways.” In his view, cognitive analytics are:

1. Context-driven: They use context-driven, dynamic algorithms to automate the discovery of patterns and knowledge.

2. Hypothesis-oriented: They generate alternative answers with evidence parsed and inferred from disparate data.

3. Learning-based: They reason and learn instantly and incrementally, improving over time without direct modeling.

Deloitte analysts Rajeev Ronanki (@RajeevRonanki) and David Steier (@dsteier) write, “Cognitive analytics offers a way to bridge the gap between big data and the reality of practical decision making.”[3] Practical decision making is probably the biggest reason that Sanchez’ claim that cognitive computing will be a disruptive force is going to prove accurate. In fact, Ginni Rometty, Chairman and CEO of IBM, told participants at an IBM-sponsored conference, “In the future, every decision that mankind makes is going to be informed by a cognitive system like Watson, and our lives will be better for it.”[4] Accenture analysts agree with Sanchez, calling cognitive computing “the ultimate long-term solution” for many of businesses’ most nagging challenges.[5] Bain analysts Michael C. Mankins and Lori Sherer (@lorisherer) assert that if you can improve a company’s decision making, you can dramatically improve its bottom line.[6] They explain:

“The best way to understand any company’s operations is to view them as a series of decisions. People in organizations make thousands of decisions every day. The decisions range from big, one-off strategic choices (such as where to locate the next multibillion-dollar plant) to everyday frontline decisions that add up to a lot of value over time (such as whether to suggest another purchase to a customer). In between those extremes are all the decisions that marketers, finance people, operations specialists and so on must make as they carry out their jobs week in and week out. We know from extensive research that decisions matter — a lot. Companies that make better decisions, make them faster and execute them more effectively than rivals nearly always turn in better financial performance. Not surprisingly, companies that employ advanced analytics to improve decision making and execution have the results to show for it.”

IBM consistently emphasizes that the future will be characterized by humans and cognitive computers working together. As one expert puts it, when you listen to a violinist, it’s really the violin that makes the sound, but the beauty of that sound depends on the talent of the violinist. Together, the violin and the musician can do something that neither can do on its own.

Like other experts in the field, Ronanki and Steier believe that cognitive systems are going to allow humans to push the boundaries that have previously limited their analysis. They write:

“Today, analytical systems that enable better data-driven decisions are at a crossroads with respect to where the work gets done. While they leverage technology for data-handling and number-crunching, the hard work of forming and testing hypotheses, tuning models, and tweaking data structures is still reliant on people. Much of the grunt work is carried out by computers, while much of the thinking is dependent on specific human beings with specific skills and experience that are hard to replace and hard to scale. … Cognitive analytics can push past the limitations of human cognition, allowing us to process and understand big data in real time, undaunted by exploding volumes of data or wild fluctuations in form, structure, and quality. Context-based hypotheses can be formed by exploring massive numbers of permutations of potential relationships of influence and causality — leading to conclusions unconstrained by organizational biases.”

The Post-PC Era ushered in by cognitive computing will likely last until a practical quantum computer is built. Even then, many of the techniques now being created will be transferable to quantum machines.

Footnotes

[1] Matt Sanchez, “Cognitive Computing and Next Gen AI – A Four Part Series,” Cognitive Scale, April 2015.

[2] John Gordon, “The third era of IT,” The Economist Intelligence Unit.

[3] Rajeev Ronanki and David Steier, “Cognitive Analytics,” Deloitte University Press, 21 February 2014.

[4] Lauren F. Friedman, “The CEO of IBM just made a jaw-dropping prediction about the future of artificial intelligence,” Business Insider, 15 May 2015.

[5] Pierre Nanterme and Paul Daugherty, “From Digitally Disrupted to Digital Disrupter,” Accenture, 2014.

[6] Michael C. Mankins and Lori Sherer, “Creating value through advanced analytics,” Bain Brief, 11 February 2015.